Overview

This post examines the classical algebraic theory of determinants through the lens of Sylvester’s Determinantal Identity (Sylvester, 1851) and the Schweinsian Expansion [@schweins1825theorie]. These results provide the foundational framework for modern fraction-free algorithms, recursive least squares, and sequence acceleration techniques. We explore their derivation via Schur complements and their implications for numerical analysis.

🏷️ Sylvester’s Determinantal Identity

Sylvester’s identity is a fundamental result in multilinear algebra that relates the determinant of a large matrix to the determinants of its “bordered” minors. It serves as a bridge between local submatrix properties and global determinantal invariants.

The Formal Statement

Let and let be its leading principal submatrix. For indices such that , define the bordered minor as:

where is the -th column of the top-right block and is the -th row of the bottom-left block.

Sylvester’s Identity states that the determinant of the matrix formed by these minors is proportional to the original determinant:

Proof via Schur Complements

The most insightful proof of this identity utilizes the Schur Complement. Consider the block decomposition of into a leading principal submatrix and its surrounding blocks:

By the fundamental property of block matrices, the determinant admits the factorization , where is the Schur complement of in .

A crucial observation is that the entries of the Schur complement correspond precisely to the normalized bordered minors. Specifically, for indices , the -th entry is given by . By applying Cramer’s rule to the augmented system , we find that:

Upon substituting this entry-wise expression into the determinant of the Schur complement, we obtain:

Since the matrix of bordered minors is of order , the scalar factor is raised to the -th power when pulled out of the determinant. This leads to the identity:

Rearranging the terms yields the final result: . ▮

🏷️ The Schweinsian Expansion

While Sylvester’s identity provides a “local” update rule for determinants, the Schweinsian Expansion [@schweins1825theorie] provides a “global” series for the ratio of two determinants. This is critical in applications where a basis is expanded iteratively, such as in polynomial interpolation or recursive filtering.

Schweins' Identity

Let denote the determinant . For a vector , the difference between ratios of successive orders is given by:

Proof of Schweins' Identity

The proof of this identity is a masterful application of Sylvester’s Determinantal Identity to a specifically constructed composite determinant.

Consider the determinant of the matrix formed by the columns . By applying Sylvester’s identity with the leading principal submatrix , we can express the global determinant in terms of its -th order condensation.

Specifically, let be a matrix where we have appended the vector as an additional column and row. By analyzing the difference of the ratios:

We can combine these terms over a common denominator . The numerator of the resulting fraction becomes:

This expression is precisely the expansion of the Desnanot-Jacobi identity (the case of Sylvester’s Identity) applied to the matrix . Identifying the minors:

- corresponds to

- corresponds to

The identity reveals that this numerator is equal to the product . Dividing by the common denominator yields the Schweinsian result. ▮

🏷️ Insights into the Schweinsian Series

By summing these differences telescopically, we obtain the full Schweinsian Series:

Architectural Intuition

- Discrete Taylor Series: The expansion can be viewed as a discrete analog of a Taylor series for determinantal quotients.

- Interpolation Link: This identity is the algebraic core of the Aitken-Neville algorithm.

- Connection to Divided Differences: In the limit, these ratios converge to Divided Differences, linking classical linear algebra directly to numerical approximation theory.

🏷️ Numerical Stability and Exact Computing

The professional significance of these identities lies in their application to Exact Integer Arithmetic.

- The Bareiss Algorithm: This algorithm uses Sylvester’s identity (Bareiss, 1968) to perform Gaussian elimination without introducing fractions. By keeping calculations within the ring of integers, it eliminates rounding errors and minimizes expression swell in symbolic computation.

- Convergence Acceleration: In the theory of Padé approximants, the -algorithm uses these determinantal updates to transform slowly converging sequences into rapidly converging ones. This shares the “spectral tail” logic discussed in on improved Nyström bounds.

🏷️ Application: Exact Linear Solvers

The utility of the Bareiss algorithm extends beyond determinants to the resolution of linear systems where and have integer or rational entries.

Solving without Precision Loss

- Fraction-Free Systems: Bareiss uses Sylvester’s Identity to perform divisions that are guaranteed to have no remainder, keeping intermediate coefficients as small as possible.

- Cramer’s Rule Efficiency: The Bareiss algorithm reduces complexity to by performing fraction-free elimination on the augmented matrix .

- Algebraic Robustness: For ill-conditioned systems, the Bareiss approach provides the exact rational solution, avoiding ghost singularities caused by numerical underflow.

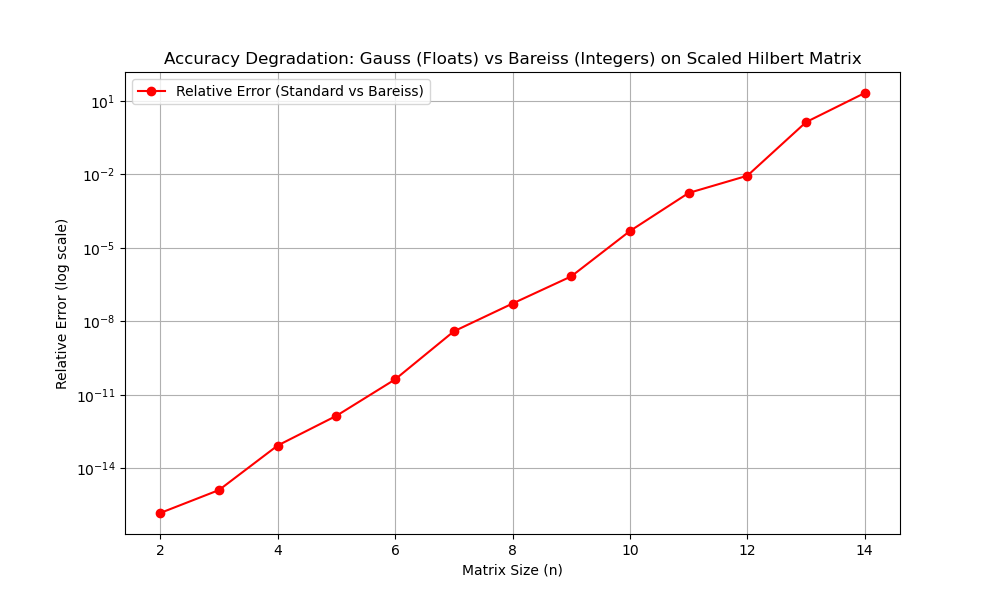

📊 Numerical Verification

To demonstrate the practical utility of Sylvester’s Identity, we compare the Bareiss Algorithm (fraction-free elimination) against standard floating-point Gaussian elimination on a scaled Hilbert Matrix (see content/codes/2024 Spring/bareiss_algorithm.py).

Exact Computing vs. Precision Loss

1. The Benchmark: We use a Hilbert matrix (), which is famously ill-conditioned.

2. The Bareiss Mechanism: The Bareiss algorithm uses the update:

where the division is guaranteed to have a zero remainder.

3. The Results:

- Bareiss (Exact): Produces the mathematically true determinant.

- Standard Gauss (Approximate): Uses 64-bit floating point numbers.

🏷️ Notes

- The “condensation” approach inherent in these identities is a precursor to modern discretization methods seen in on convergence of graph Laplacian to manifold’s Laplacian.

- The Schur complement perspective used in the proof is a recurring theme in the stability analysis of on eigenvalue estimate of kernel.

🔗 See Also

- on improved Nyström bounds --- Sylvester’s identity provides the algebraic foundation for the determinantal ratios and recursive updates used in spectral approximation.